The generative video race is heating up in China is profiled by BTW Media because published evidence links it to internet infrastructure, governance, operational dependencies, or market visibility.

The generative video race is heating up in China is tracked as a internet infrastructure institution within the internet infrastructure ecosystem.

The generative video race is heating up in China has public-source relevance to network operations, governance, dependency mapping, or market structure.

The generative video race is heating up in China has public-source relevance to network operations, governance, dependency mapping, or market structure.

The generative video race is heating up in China is tracked as a internet infrastructure institution within the internet infrastructure ecosystem.

Public-source signals support medium-impact monitoring for infrastructure visibility and dependency analysis.

The generative video race is heating up in China is profiled by BTW Media because published evidence links it to internet infrastructure, governance, operational dependencies, or market visibility.

Public-source signals support medium-impact monitoring for infrastructure visibility and dependency analysis.

| 0.90–1.00 | A | High — direct sources |

| 0.75–0.89 | A/B | Strong |

| 0.55–0.74 | B/C | Medium |

| 0.35–0.54 | C/D | Weak–medium |

| 0.10–0.34 | D | Weak signal |

| 0.00–0.09 | D | Internal monitoring |

Several public sources

- Tencent has released a new version of its open-source video generation model, DynamiCrafter, on GitHub.

- This update focuses primarily on animating natural scenes with stochastic dynamics (such as clouds and fluids) or domain-specific motion.”

- After generating text and images, generating video is expected to be the next focus of the artificial intelligence race.

Some of China’s biggest tech companies have been quietly ramping up efforts to gain a foothold in the text-and-image-to-video space. On February 5, Chinese Internet giant Tencent, best known for its video game empire and chat app wechat, released a new version of its open source video generation model DynamiCrafter on GitHub.

How it works

Like other video generation tools on the market, DynamiCrafter uses a diffusion method to convert captions and still images into videos that are a few seconds long. Inspired by natural diffusion phenomena in physics, diffusion models in machine learning can transform simple data into more complex and realistic data, similar to how particles move from one region of high concentration to another of low concentration.

Also read: Google’s AI-powered search now generates images

The second generation of DynamiCrafter is producing video at 640 x 1024 pixel resolution, an upgrade from the 320 x 512 video that was first released in October. An academic paper published by the team behind DynamiCrafter notes that its technique differs from its competitors in that it expands the applicability of image animation techniques to “more general visual content.”

“The key idea is to exploit the motion priors of text-to-video diffusion models by incorporating images into the generation process as a guide,” the paper says. In contrast, “traditional” techniques “mainly focus on animating natural scenes with random dynamics (e.g., clouds and fluids) or domain-specific motion (e.g., human hair or body motion).”

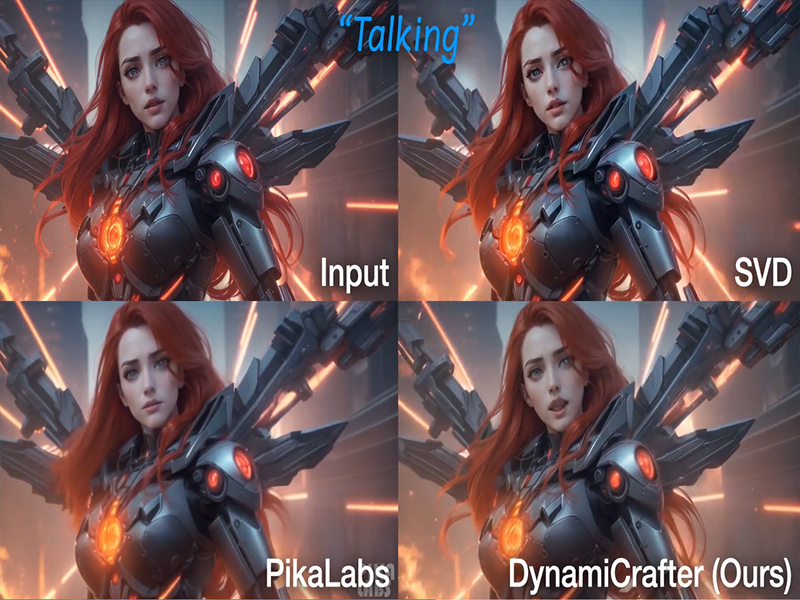

In a demo comparing DynamiCrafter, Stable Video Diffusion(released in November), and the recently hyped Pika Labs, the results of Tencent’s model seemed more robust than the others. Inevitably, the sample chosen will lean towards DynamiCrafter.”

A new no-gun war between companies

It is undeniable that after generating text and images, generating video is expected to be the next focus of the artificial intelligence race. As a result, startups and tech companies are expected to pour significant resources into this space. China is no exception.In addition to Tencent and TikTok’s parent Bytedance, Baidu and Alibaba have also released their own video diffusion models.

Both ByteDance’s MagicVideo and Baidu’s UniVG have posted demos on GitHub, but neither appears to be available to the public. Like Tencent, Alibaba has open sourced its video generation model, VGen, a strategy that has become increasingly popular among Chinese tech companies looking to reach a global developer community.

At A Glance

- Name: The generative video race is heating up in China

- Type: Internet infrastructure institution

- Base: Asia Pacific

- Profile focus: Institution

What It Does

- Public records support monitoring of its role, services, and key relationships.

Why It Matters

- Public-source signals support medium-impact monitoring for infrastructure visibility and dependency analysis.

- Operational criticality: Medium

- Time horizon: Next quarter

What To Watch

- Monitoring focuses on verified service continuity, governance changes, and relationship signals.

Track verified source updates, role changes, and current public evidence.

Public-source signals support medium-impact monitoring for infrastructure visibility and dependency analysis.

Longer-term relevance depends on verified operating, policy, and relationship changes.

Member Briefing

Deeper Profile Context

Login is required to unlock the full profile briefing and source notes.

Only for Strategy Circle

Strategic Circle Access

Open to all readers. Unlock profile briefings after joining and logging in.

Join Strategic CircleOnly for Leadership Alliance

Leadership Alliance Access

For owners and management of IP-holding companies. Login required to unlock.

Join Leadership Alliance