Tesla is profiled by BTW Media because published evidence links it to internet infrastructure, governance, operational dependencies, or market visibility.

Tesla is tracked as a network infrastructure operator within the internet infrastructure ecosystem.

Tesla has public-source relevance to network operations, governance, dependency mapping, or market structure.

Public-source signals support medium-impact monitoring for infrastructure visibility and dependency analysis.

| Confidence score | Grade | Signal strength |
| --- | --- | --- |
| 0.90–1.00 | A | High (direct sources) |
| 0.75–0.89 | A/B | Strong |
| 0.55–0.74 | B/C | Medium |
| 0.35–0.54 | C/D | Weak to medium |
| 0.10–0.34 | D | Weak signal |
| 0.00–0.09 | D | Internal monitoring |

Sources: several public sources.

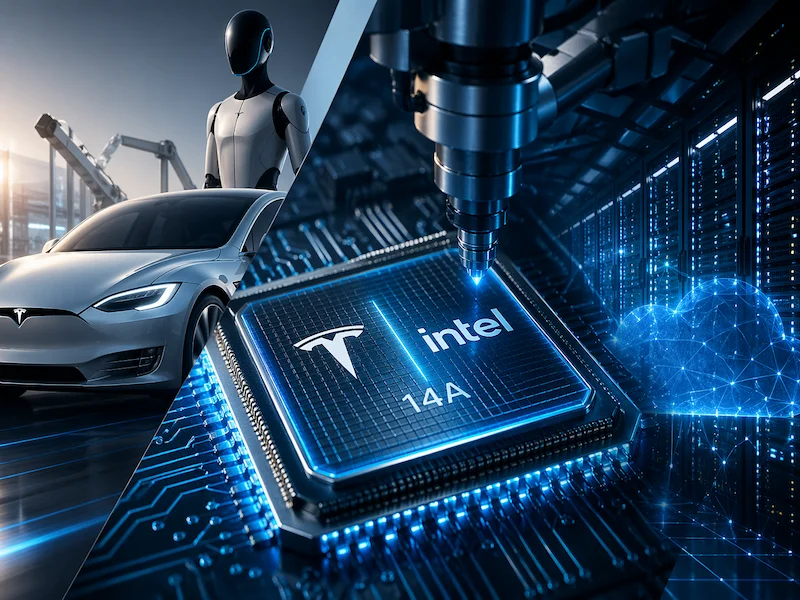

- Musk publicly tied Tesla's AI chip demand to Intel's 14A node before it enters mass production.

- The move is an early demand signal for Intel's 14A foundry process.

What happened

Tesla chief executive Elon Musk said the company plans to use Intel's 14A process technology for its Terafab AI chip programme. The statement links Tesla's in-house AI hardware work to Intel's upcoming advanced manufacturing node, which is still in development.

The Terafab project is designed around large-scale chip production capacity aimed at supporting Tesla's AI workloads. These include systems for autonomous driving, robotics, and model training. The programme is expected to rely on future semiconductor process nodes as they become available at production scale.

Intel's 14A process is part of its next-generation manufacturing roadmap and is intended for high-performance, power-efficient computing. It remains in development and is not yet in mass production.

Tesla has increasingly focused on in-house compute capabilities in recent years, particularly for AI training and inference workloads. The potential use of Intel's future process technology suggests early-stage coordination between chip design requirements and manufacturing roadmaps, rather than a confirmed production contract at scale.

Why it's important

The announcement adds an early demand signal for Intel's 14A process, even though the node is still under development. For Intel, external commitments at this stage can help validate its foundry strategy as it competes in advanced manufacturing.

For Tesla, the interest reflects its continued push to reduce reliance on external chip suppliers for AI workloads. However, the timeline for Terafab and Intel's 14A ramp remains uncertain, which means any production relationship would likely depend on technical readiness rather than immediate deployment.

From a supply chain perspective, the link between a high-volume AI workload plan and a pre-production semiconductor node highlights how closely chip roadmaps and system-level AI planning are beginning to interact. In practice, both sides are aligning expectations ahead of actual manufacturing maturity.

At this stage, the announcement functions more as a forward-looking compatibility signal than a confirmed production arrangement, with execution depending on how Intel's process development progresses and how Tesla's internal chip demand evolves.

At A Glance

- Name: Tesla

- Type: Network infrastructure operator

- Base: Global

- Profile focus: Company

What It Does

- Public records support monitoring of its role, services, and key relationships.

Why It Matters

- Public-source signals support medium-impact monitoring for infrastructure visibility and dependency analysis.

- Operational criticality: Medium

- Time horizon: Next quarter

What To Watch

- Monitoring focuses on verified service continuity, governance changes, and relationship signals.

Track verified source updates, role changes, and current public evidence.

Longer-term relevance depends on verified operating, policy, and relationship changes.