Exploring the depths of creativity with DALL-E 3: A new era in AI art is profiled by BTW Media because published evidence links it to internet infrastructure, governance, operational dependencies, or market visibility.

Exploring the depths of creativity with DALL-E 3: A new era in AI art is tracked as an internet infrastructure institution within the internet infrastructure ecosystem.

Exploring the depths of creativity with DALL-E 3: A new era in AI art has public-source relevance to network operations, governance, dependency mapping, or market structure.

Public-source signals support medium-impact monitoring for infrastructure visibility and dependency analysis.

| Score | Grade | Evidence strength |
| --- | --- | --- |
| 0.90–1.00 | A | High (direct sources) |
| 0.75–0.89 | A/B | Strong |
| 0.55–0.74 | B/C | Medium |
| 0.35–0.54 | C/D | Weak–medium |
| 0.10–0.34 | D | Weak signal |
| 0.00–0.09 | D | Internal monitoring |

- DALL-E is a generative AI model for image generation created by OpenAI. It first launched in January 2021, and its third iteration, DALL-E 3, is the latest release.

- Five guidelines help in creating effective prompts: clarity, creativity, style mention, composition, and modifiers.

- The use of artificial intelligence (AI)-generated art raises questions about copyright, originality, and the value of human creativity.

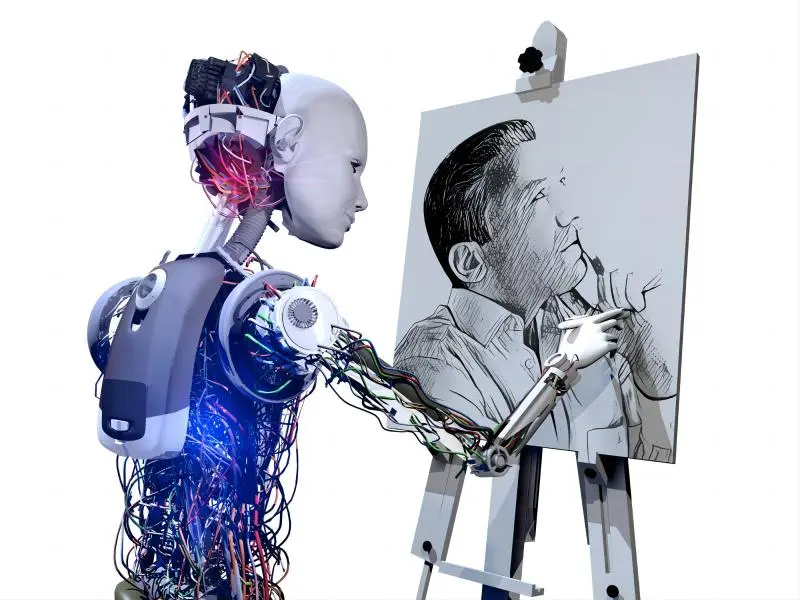

The advent of DALL-E 3, the latest iteration of OpenAI’s image generation model, has opened a Pandora’s box of possibilities for artists, designers, and creatives across the globe. With its ability to understand complex textual prompts and generate images from them, DALL-E 3 is not just a tool but a catalyst for a new wave of creativity. In this blog post, we will delve into the intricacies of crafting prompts for DALL-E 3, explore its capabilities, and discuss the potential impact on the art world.

What is DALL-E 3?

DALL-E 3 is a product of OpenAI, an AI research lab, and builds on its predecessors, DALL-E and DALL-E 2. It is a deep neural network that interprets natural language prompts and generates corresponding images. Compared with its predecessors, DALL-E 3 has been tuned for a more nuanced understanding of prompts, making it a powerhouse for creative expression.

DALL-E first launched in January 2021, and DALL-E 3 is its third and latest iteration. As a fun fact, the name “DALL-E” combines the names of Pixar’s 2008 film WALL-E and Salvador Dalí, the Spanish surrealist artist known for his technical prowess.

One thing DALL-E, DALL-E 2, and DALL-E 3 have in common is that they are all text-to-image models built with deep learning techniques that let users generate digital images from natural language. Beyond that, they differ considerably. The original DALL-E used a Discrete Variational Auto-Encoder (dVAE), a technique based on the Vector Quantised Variational AutoEncoder (VQ-VAE) research from Alphabet’s DeepMind division. DALL-E 2 aimed to generate more realistic images at higher resolutions and to combine concepts, attributes, and styles.

DALL-E 3 can understand “significantly more nuance and detail” than its predecessors. In practice, the model follows complex prompts more accurately and generates more coherent images. It is also integrated into ChatGPT, another OpenAI generative AI product.

Crafting effective prompts

The key to unlocking the full potential of DALL-E 3 lies in the art of crafting prompts. A good prompt is not just a description but a blueprint for the AI to follow. Here are some guidelines for creating effective prompts:

Clarity: Be as clear and specific as possible. The more precise your description, the better the output.

Creativity: Push the boundaries of your imagination. DALL-E 3 can handle abstract and complex concepts.

Style mention: If you have a specific art style in mind, mention it. DALL-E 3 can emulate styles from Van Gogh to modern digital art.

Composition: Describe the layout and elements you want in the image, such as the position of subjects and the background.

Modifiers: Use words like “surreal,” “cyberpunk,” or “whimsical” to guide the tone and style of the generated image.
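
One way to keep the five guidelines above in view is to assemble a prompt from named parts. The sketch below is only an illustration: `PromptSpec` and `build_prompt` are hypothetical helpers invented here, not part of DALL-E 3 or any OpenAI library, and the comma-separated layout is an assumption rather than a required prompt format.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container mirroring the five guidelines (not an official API)."""
    subject: str                    # clarity: the precise thing to depict
    concept: str = ""               # creativity: an unusual twist or abstract idea
    style: str = ""                 # style mention, e.g. "Van Gogh"
    composition: str = ""           # layout: subject position, background
    modifiers: list[str] = field(default_factory=list)  # tone words such as "surreal"


def build_prompt(spec: PromptSpec) -> str:
    """Join the non-empty parts into one comma-separated prompt string."""
    parts = [spec.subject.strip()]
    if spec.concept:
        parts.append(spec.concept.strip())
    if spec.style:
        parts.append(f"in the style of {spec.style.strip()}")
    if spec.composition:
        parts.append(spec.composition.strip())
    if spec.modifiers:
        parts.append(", ".join(m.strip() for m in spec.modifiers) + " mood")
    return ", ".join(parts)


if __name__ == "__main__":
    spec = PromptSpec(
        subject="a lighthouse on a cliff at dusk",
        concept="its beam made of floating paper lanterns",
        style="Van Gogh",
        composition="lighthouse on the left third, stormy sea in the background",
        modifiers=["surreal", "whimsical"],
    )
    print(build_prompt(spec))
```

The resulting string can be pasted into ChatGPT or passed to whichever image-generation interface is in use. Keeping the parts separate makes it easy to change one element, such as the style, while holding the rest of the prompt fixed.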

The impact on the art world

DALL-E 3’s ability to generate images from text has profound implications for the art world. It democratises the creation of art by allowing anyone with a vivid imagination to produce high-quality images without traditional artistic skills. This could lead to a surge in innovative artwork, new forms of visual storytelling, and a redefinition of what constitutes art.

Ethical considerations

With great power comes great responsibility. The use of AI-generated art raises questions about originality, copyright, and the role of human creativity. It is crucial to establish ethical guidelines that protect the rights of artists and ensure AI is used as a tool for enhancement rather than replacement.

DALL-E 3 represents a significant leap in AI’s ability to understand and create art. It challenges our perception of creativity and opens up new avenues for artistic expression. As we stand on the cusp of this technological revolution, it is essential to embrace the potential of AI while also considering the ethical and societal implications it presents. The future of art is not just digital; it is imaginative, collaborative, and, with DALL-E 3, limitless.
